DATE: 2018

PRODUCT: Smart Camera for Meta Portal.

ROLE: In close partnership with engineering, I led the UX of the camera, defining heuristics and cinematographic rules for the machine learning algorithm.

IMPACT: We transformed Smart Camera technology from a mechanical and distracting behavior into a natural and transparent experience. The framing movements receded into the background, letting the people on the other side of the video call be the focus. This was the first Smart Camera solution in the market and the principal value proposition for Portal.

Video explaining the features of the Smart Camera.

Combining human intuition and AI

This story is about how design and engineering can partner to humanize technology and how we evolved our understanding of what “smart” would mean for this product.

The first challenge was understanding how we could design this Smart Camera experience.

From my side, I had no defined user journey, infinite use cases, and none of the usual interfaces, like UI or physical affordances.

I collaborated closely with Eric Hwang, a software engineer on the Portal AI team. Engineering often relies on clear definitions of success and metrics to measure it. In the case of Smart Camera, where the user experience is so subjective, it was quite challenging for him to figure out how we would define what to do and how we would know we were making progress.

What is a “smart” camera?

Hypothesis #1:

Is “smart” to shoot like film professionals? What if we could adopt the best practices and mimic the skill of people who make movies?

We decided to start with an experiment. We asked multiple film editors to edit the same raw video sequences and studied the results:

Editors were given the same scene and asked to choose the best framing from their point of view.

You can see in the video above that one editor chose to focus on context, keeping the rollercoaster in frame. In contrast, the other editor chose to keep a tighter frame on the girls.

And we saw this over and over in different situations.

Each editor interpreted the situation in their own way, based on their personal, subjective understanding of the scene and their own aesthetic judgment.

Without consensus, it is really hard to train machine learning models: we can’t label each shot as “correct” or “wrong” in a broadly accepted way.

This rules out a solution based purely on AI.

Hypothesis #2:

Is “smart” to frame like a single great director? Instead of surveying the broad industry, can we mimic a single well-liked cinematography model?

So we decided to do one more experiment. We hired a famous film director, and we scripted several different situations in which people are moving around, arriving, leaving, standing up, and grouping in odd ways. We wanted to see how the director would react to these situations.

The director selected his top 3 camera operators, who shot the same scene in parallel without knowing the scripts. The idea was that the director could then choose the best shot for each scene:

The output of 3 camera operators shooting the same live scene.

We saw the same patterns we noticed in the first experiment, with different operators choosing different framings for a given situation.

The cameramen struggled with the Portal constraints:

• Single POV

• Unscripted situations

• Landscape and portrait views

• Awkward views

• Chaos

And we started to challenge some assumptions.

What is a director without...

• A message to convey?

• A predefined script?

• Actors?

• Control of the environment?

• Multiple takes?

• Multiple camera angles?

Answer: a live camera operator.

The clarity gained from this answer helped us refine our mental model of how and what we were trying to design.

Update: What is a “smart” camera?

Conclusion: “Smart” is to adopt the intuition of a live camera operator.

Some behaviors we decided to emulate:

• Camera movement physics

• Human reaction time

• Shot transitions

• Always anticipating movement
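Two of the behaviors above, human reaction time and camera movement physics, can be illustrated with a minimal sketch. It is not Portal's implementation: the function name `follow` and the parameters `reaction_frames` and `stiffness` are hypothetical, chosen only to show a camera that waits a moment before responding and then eases toward the subject instead of snapping to it.

```python
from collections import deque


def follow(targets, reaction_frames=6, stiffness=0.15):
    """Return camera positions that trail a subject like a human operator.

    targets: subject positions, one per frame (any 1-D coordinate).
    reaction_frames: hypothetical delay, in frames, before the camera reacts
        (must be at least 1).
    stiffness: hypothetical fraction of the remaining gap closed each frame.
    """
    # Buffer of what the "operator" has not reacted to yet.
    delayed = deque([targets[0]] * reaction_frames, maxlen=reaction_frames)
    camera = targets[0]
    path = []
    for target in targets:
        seen = delayed[0]        # subject position from reaction_frames ago
        delayed.append(target)   # the oldest entry drops out automatically
        camera += stiffness * (seen - camera)  # ease toward it, no hard snap
        path.append(camera)
    return path


# Subject sits still for 5 frames, then jumps to a new spot.
subject = [0.0] * 5 + [1.0] * 60
camera_path = follow(subject)
```

In this sketch the camera stays put for `reaction_frames` frames after the subject moves, then closes the gap gradually without ever passing the subject.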

Professional camera operators had to fit in a tight space and compete with our Smart Camera.

Key takeaways

1. People prefer a predictable experience to one that fails to be magical.

We learned from the experiments that building a magical, intuitive system can be risky if the system cannot confidently read the social and environmental context.

What would happen if the camera mistook a very serious conversation for a fun birthday party and behaved accordingly? It could be very disruptive, weird, and sometimes just plain inappropriate.

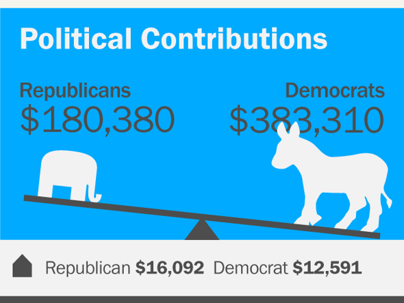

Here is a graph mapping how immersion and system intuition are related. If you know the uncanny valley problem, you will notice the similarity. Starting from a baseline level of immersion that requires only basic intuition, immersion actually gets worse as the system becomes more intuitive, and only improves again at the far end.

We chose to build a more predictable experience, giving users consistency and control until we could provide the magical experience many envision in the future.

Similar to the uncanny valley problem, the feeling of immersion gets worse before it gets better.

2. Humans tend to accept human-like mistakes

In this scene, I am walking left, and we predict I will keep doing that. When I change direction, we overshoot for a moment and then correct.

Probably none of you felt weird about the overshooting.

If a machine makes a mistake the same way a human does, it feels more natural, and we don’t become as aware of it.

Overshoot movement based on prediction.

But there is a nuance there. For example, as humans, we understand the difference between walking and standing up. We know that when people stand up, they have a limit on their height, so we know that the movement will end soon.

If we simply apply the prediction we use for horizontal movement, we will err like a machine, not like a human.

For this reason, we differentiated the vertical camera movement for Smart Camera. Check the difference in the video below:

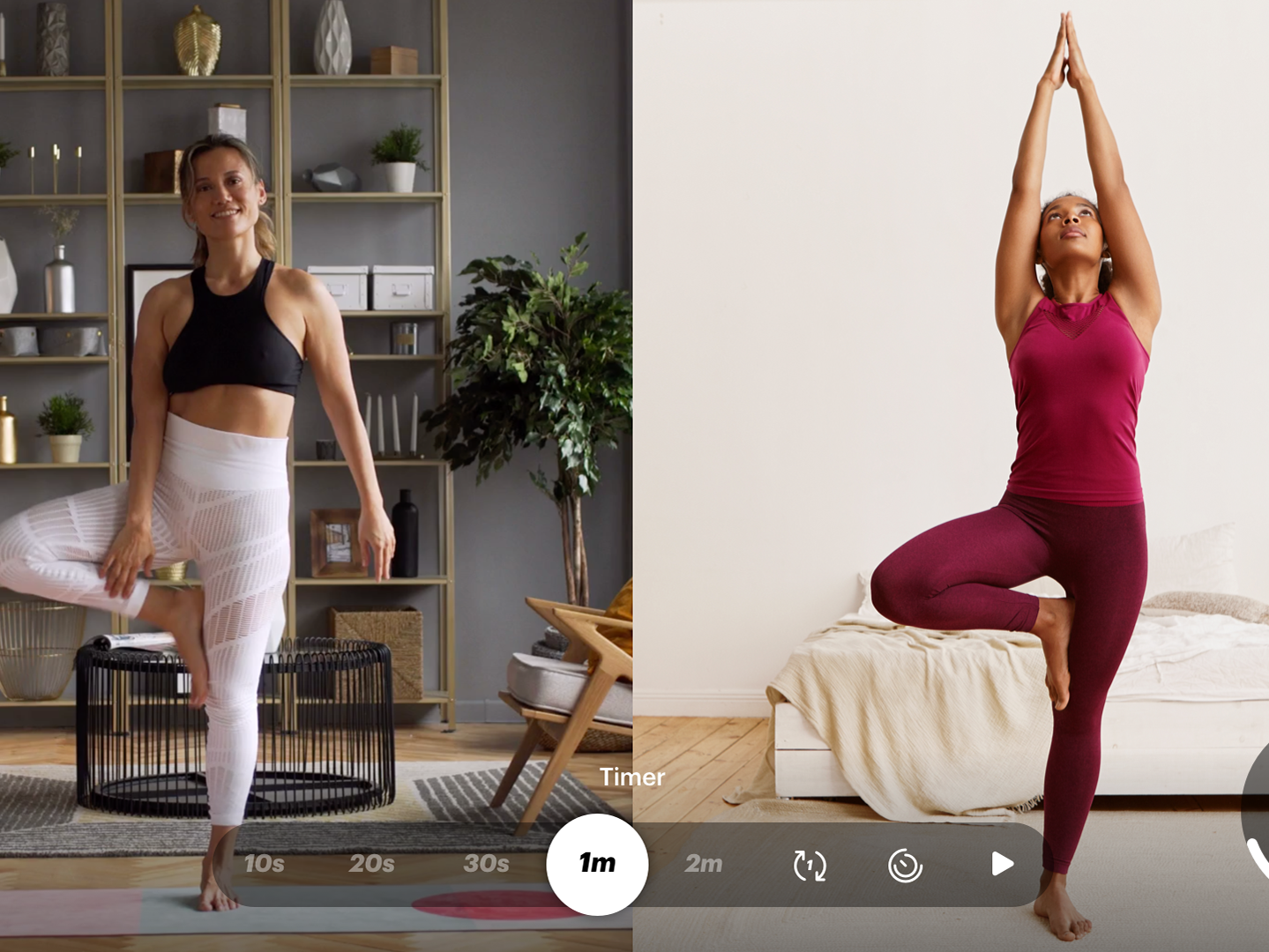

Left: overshoot as in horizontal movement. Right: differentiated vertical movement.
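The difference between the two kinds of prediction can be sketched in a few lines. This is an illustration under assumptions, not the algorithm Portal shipped: `predict`, `lookahead`, and `limit` are hypothetical names, and clamping to a height range is just one simple way to encode the idea that a person standing up will stop soon.

```python
def predict(position, velocity, lookahead=0.5, limit=None):
    """Guess where the subject will be `lookahead` seconds from now.

    limit: optional (low, high) range the guess may not leave. This is a
        hypothetical stand-in for "people have a limit on their height".
    """
    guess = position + velocity * lookahead  # plain linear extrapolation
    if limit is not None:
        low, high = limit
        guess = max(low, min(high, guess))   # stop at the plausible limit
    return guess


# Horizontal: no natural limit, so a sudden change of direction
# produces a brief, human-like overshoot.
next_x = predict(position=1.0, velocity=2.0)

# Vertical: someone standing up cannot rise past standing height
# (1.0 to 1.8 here is an assumed seated-to-standing range).
next_y = predict(position=1.5, velocity=2.0, limit=(1.0, 1.8))
```

With these example numbers the horizontal guess runs ahead to 2.0, while the vertical guess stops at 1.8 instead of extrapolating to 2.5.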

3. To promote human connection, smart is to be invisible

The more natural the camera feels, the less aware of it we become and the more we can focus on the people in the video. People can move naturally and forget about how they are being framed.

Final result

Here’s a fun video showing the differences after one year of collaboration, from October 2017 to October 2018.

The top video is the older one, and the bottom video is the newer one.

You can see how the bottom one showcases improved camera simulation mechanics, with smoother motions, linear zoom, engagement identification, and motion prediction. The older one feels much more mechanical and artificial.

BEFORE:

• Mechanical

• Jarring

• Impulsive

• Distracting

AFTER:

• Natural

• Smooth

• Calm

• Immersive

Loved by people and by marketing

Smart Camera is the hero experience on Portal devices. It was one of the two features users recalled most often when describing what they like about Portal or why they would recommend it to friends.

Below are some advertising pieces. Check the official website for more details.

Comparison with smartphone video calls.

A fun campaign with the Muppets for the 2019 holidays.